Aims of this seminar🔗

- Introduce Chalmers e-Commons/C3SE and NAISS

- Our HPC systems and the basic workflow for using them

- various useful tools and methods

- how we can help you

- This presentation is available on C3SE's web page:

Chalmers e-Commons🔗

- C3SE is part of Chalmers e-Commons managing the HPC resources at Chalmers

- located in south section of Origo building, 6th floor. Map

- Infrastructure team consists of:

- Sverker Holmgren (director of e-Commons)

- Thomas Svedberg

- Mikael Öhman

- Viktor Rehnberg (Application Expert AI/ML)

- Chia-Jung Hsu (Application Expert HPC)

- Yunqi Shao (Application Expert HPC)

- Dejan Vitlacil

- Leonard Nielsen (PhD student)

NAISS🔗

- We are part of NAISS, National Academic Infrastructure for Supercomputing in Sweden, a government-funded organisation for academic HPC

- NAISS replaced SNIC starting 2023.

- Funded by NAISS and Chalmers

- 5 other NAISS centres

- Lunarc in Lund

- NSC, National Supercomputing Centre, in Linköping

- PDC, ParallellDatorCentrum, at KTH in Stockholm

- Uppmax in Uppsala

- HPC2N, High Performance Computing Centre North, in Umeå

- Much infrastructure is shared between centres - for example, our file backups go to Umeå and C3SE runs SUPR.

- Similar software stack is used on other centres: Module system, scheduler, containers.

Compute clusters at C3SE🔗

- We primarily run Linux-based compute clusters

- We have two production systems: Alvis and Vera

Compute clusters at C3SE🔗

- We run Rocky Linux 8, which is a clone of Red Hat Enterprise Linux

- Rocky is not Ubuntu!

- Users do NOT have

sudorights! - You can't install software using

apt-get!

Our systems: Vera🔗

- Not part of NAISS, only for C3SE members.

- Vera hardware

- 96 GB RAM per node standard

- Nodes with 192, 384, 512, 768, 1024, 2048 GB memory also available (some are private)

- 230 Intel Xeon Gold 6130 ("Skylake") 16 cores @ 2.10 GHz (2 per node)

- +2 nodes with 2 NVidia V100 accelerator cards each.

- +2 nodes with 1 NVidia T4 accelerator card each.

- 25 Intel(R) Xeon(R) Gold 6338 ("Icelake") 32 cores @ 2.00GHz (2 per node)

- +4 nodes with 4 Nvidia A40 cards each

- +3 nodes with 4 Nvidia A100 cards each

- Skylake login nodes are equipped with graphics cards.

Vera hardware details🔗

- Main partition has

| #GPUs | GPUs | FP16 TFLOP/s | FP32 | FP64 | Capability |

|---|---|---|---|---|---|

| 4 | V100 | 31.3 | 15.7 | 7.8 | 7.0 |

| 3 | T4 | 65.1 | 8.1 | 0.25 | 7.5 |

| 16 | A40 | 37.4 | 37.4 | 0.58 | 8.6 |

| 12 | A100 | 77.9 | 19.5 | 9.7 | 8.0 |

| # nodes | type | ||||

| 215 | Skylake | ~4 | ~2 | (full 32 cores) | |

| 19 | Icelake | ~8 | ~4 | (full 64 cores) |

- Theoretical numbers!

Our systems: Alvis🔗

- NAISS resource dedicated to AI/ML research

- Consists of nodes accelerated with multiple Nvidia GPUs each

- Alvis went in production in three phases:

- Phase 1A: 44 V100 (Skylake CPUs)

- Phase 1B: 160 T4 (Skylake CPUs)

- Phase 2: 336 A100, 348 A40 (Ice lake CPUs)

- Login nodes:

alvis1has 4 T4 GPUs for testing, development (Skylake CPUs)alvis2has is primarily a data transfer node (Ice lake CPUs)

- Node details: https://www.c3se.chalmers.se/about/Alvis/

- You should apply for a project if you are working on AI/ML.

Our systems: Cephyr🔗

- Funded by NAISS and Chalmers.

- Center storage running CephFS

- Total storage area of 2PiB

- Also used for Swedish Science Cloud and dCache @ Swestore

- Details: https://www.c3se.chalmers.se/about/Cephyr/

- Will likely migrate to new, larger hardware in the near future

Our systems: Mimer🔗

- Funded by WASP via NAISS as part of Alvis.

- Center storage running WekaIO

- Very fast storage for Alvis.

- 634 TB flash storage

- 6860 TB bulk

- Details: https://www.c3se.chalmers.se/about/Mimer/

Available NAISS resources🔗

-

Senior researchers at Swedish universities are eligible to apply for NAISS projects on other centres; they may have more time or specialised hardware that suits you; e.g. GPU, large memory nodes, sensitive data.

-

We are also part of:

- Swedish Science Cloud (SSC)

- Swestore

-

You can find information about NAISS resources on https://supr.naiss.se and https://www.naiss.se

- Contact the support if you are unsure about what you should apply for.

Available local resources🔗

- All Chalmers departments are allocated a chunk of computing time on Vera

- Each department chooses how they want to manage their allocations

- Not all departments have chosen to request it

Getting access🔗

- https://supr.naiss.se is the platform we use for all resources

- To get access, you must do the following in SUPR:

- Join/apply for a project

- Accept the user agreement

- Send an account request for the resource you wish to use (only available after joining a project)

- Wait ~1 working day.

- Just having a CID is not enough!

- We will reactive CIDs or grant new a new CID if you don't have one.

- There are no cluster-specific passwords; you log in with your normal CID and password (or with a SSH-key if you choose to set one up).

- https://www.c3se.chalmers.se/documentation/getting_access

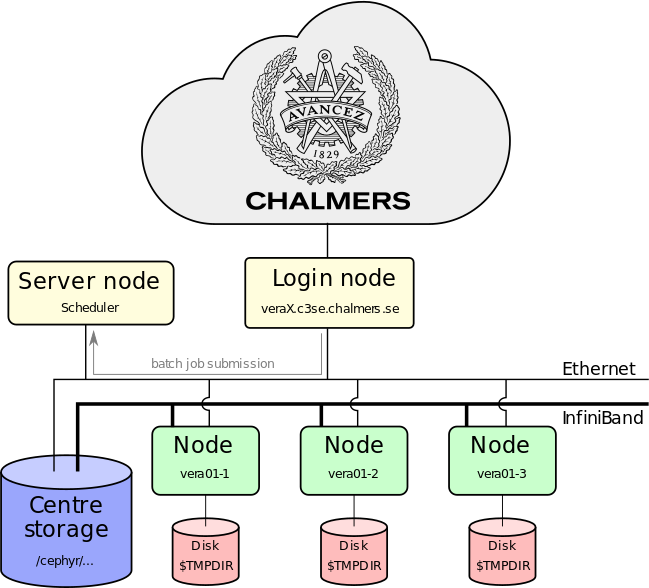

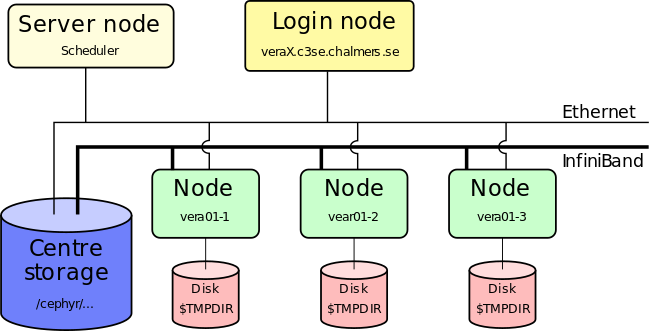

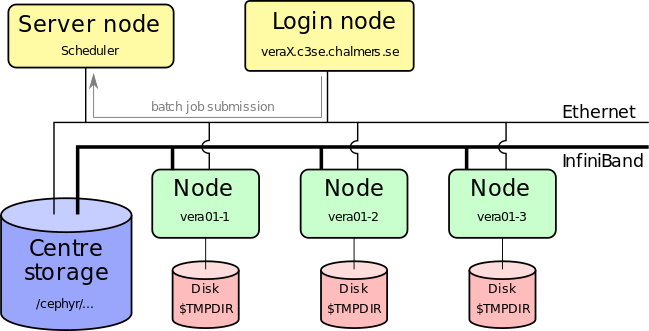

Working on an HPC cluster🔗

- On your workstation, you are the only user - on the cluster, there are many users at the same time

- You access the cluster through a login node - it's the only machine(s) in the cluster you can access directly

- There's a queuing system/scheduler which starts and stops jobs and balances demand for resources

- The scheduler will start your script on the compute nodes

- The compute nodes are mostly identical (except for memory and GPUs) and share storage systems and network

Working on an HPC cluster🔗

- Users belong to one or more projects, which have monthly allocations of core-hours (rolling 30-day window)

- Each project has a Principal Investigator (PI) who applies for an allocation

- The PI decides who can use their projects.

- All projects are managed through SUPR

- You need to have a PI and be part of a project to run jobs

- We count the core-hours your jobs use

- The core-hour usage for the past 720 hours influences your job's priority.

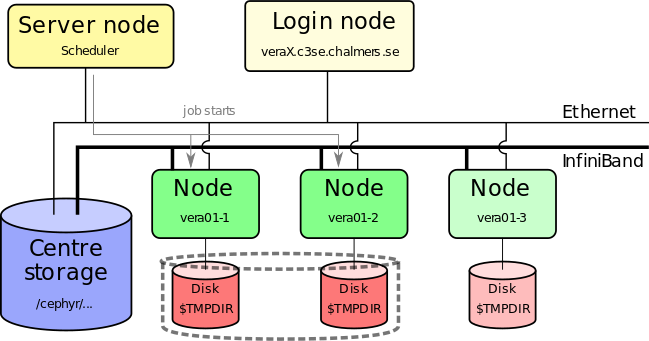

The compute cluster🔗

Preparing job🔗

- Install your own software (if needed)

- Login nodes are shared resources!

- Transfer input files to the cluster

- Prepare batch scripts for how perform your analysis

Submit job🔗

- Submit job script to queuing system (sbatch)

- You'll have to specify number of cores, wall-time, GPU/large memory

- Job is placed in a queue ordered by priority (influenced by usage, project size, job size)

Job starts🔗

- Job starts when requested nodes are available (and it is your turn)

- Automatic environment variables inform MPI how to run (which can run over Infiniband)

- Performs the actions you detailed in our job script as if you were typing them in yourself

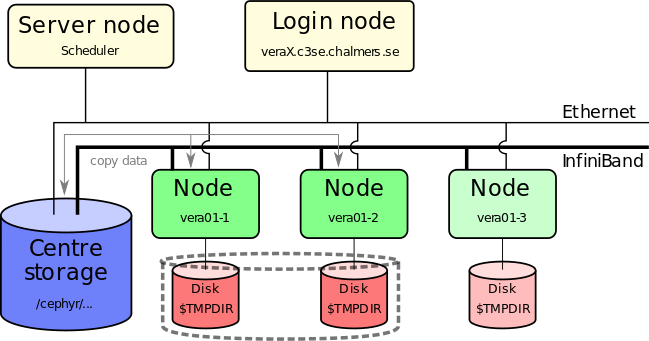

Copy data to TMPDIR🔗

- You must work on TMPDIR if you do a lot of file I/O

- Parallel TMPDIR is available (using

--gres ptmpdir:1) - TMPDIR is cleaned of data immediately when the job ends, fails, crashed or runs out of wall time

- You must copy important results back to your persistent storage

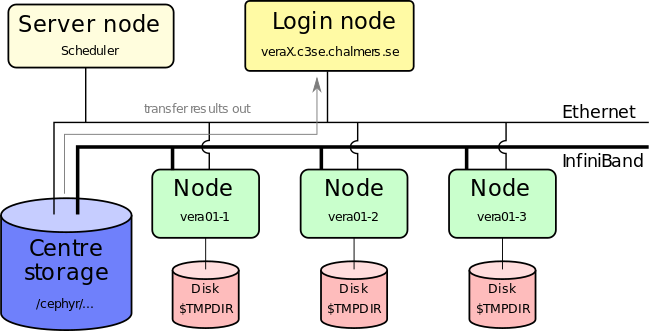

After the job ends🔗

- You can also post-process results directly on our systems

- Graphical pre/post-processing can be done via Thinlinc or the Vera portal.

Connecting via SSH🔗

- Use your CID as user name and log in with ssh:

- Vera:

ssh CID@vera1.c3se.chalmers.seorCID@vera2.c3se.chalmers.se(accessible within Chalmers and GU network) - Alvis:

ssh CID@alvis1.c3se.chalmers.seorCID@alvis2.c3se.chalmers.se(accessible from within SUNET) - Authenticate yourself with your password or set up aliases and ssh keys (strongly recommended for all)

- On Linux or Mac the ssh client is typically already installed. On windows we recommend WSL or Putty.

- WSL is a good approach if you want to build some Linux experience.

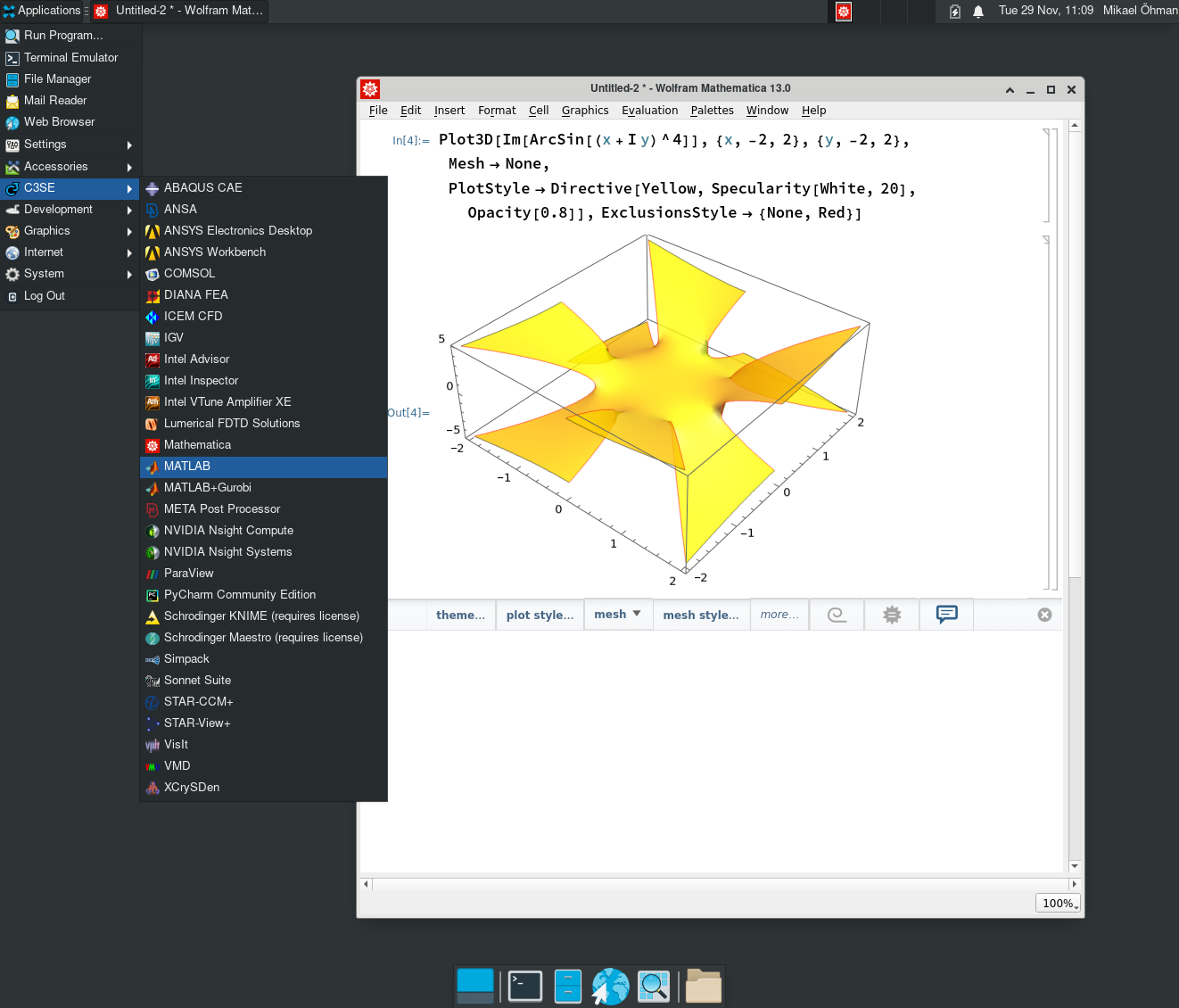

Connecting via Thinlinc🔗

- We also offer graphical login using ThinLinc, where you get a linux desktop on the cluster. This is mainly intended for graphics-intensive pre- and post processing. See https://www.c3se.chalmers.se/documentation/remote_graphics for more info.

- Using the Thinlinc client is recommende.

- Can also use the web:

- https://vera1.c3se.chalmers.se:300

- https://vera2.c3se.chalmers.se:300

- Still a shared login node!

Connecting via Open Ondemand🔗

- https://vera.c3se.chalmers.se

- Allows for easily launching interactive apps, including full desktop with all desktop apps, on compute nodes.

- Environments can be customized by copying the examples under

/apps/portal/to your home dir~/portal/. - Always prefer this to using

srun: this won't be affected by any restart of the login nodes or loss of internet connectivity.

Desktop🔗

* Similar environment in Thinlinc and Ondemand.

* Similar environment in Thinlinc and Ondemand.

VPN🔗

- Vera users need to set up Chalmers VPN (L2TP recommended) to connect from outside Chalmers or GU.

- Alvis is open to all of SUNET.

- This applies to all services: SSH, file transfer, Open Ondemand portals

Using the shell🔗

- At the prompt ($), simply type the command (optionally followed by arguments to the command). E.g:

$ ls -l

...a list of files...

- The working directory is normally the "current point of focus" for many commands

- A few basic shell commands are

ls, list files in working directorypwd, print current working directory ("where am I")cd directory_name, change working directorycp src_file dst_file, copy a filerm file, delete a file (there is no undelete!)mv nameA nameB, rename nameA to nameBmkdir dirname, create a directory See alsogrep, find, less, chgrp, chmod

man-pages🔗

- man provides documentation for most of the commands available on the system, e.g.

- man ssh, to show the man-page for the ssh command

- man -k word, to list available man-pages containing word in the title

- man man, to show the man-page for the man command

- To navigate within the man-pages (same as less) space - to scroll down one screen page

b- to scroll up one screen pageq- to quit from the current man page/- search (type in word, enter)n- find next search match (N for reverse)h- to get further help (how to search the man page etc)

Modules🔗

- Almost all software is available only after loading corresponding modules https://www.c3se.chalmers.se/documentation/modules

- To load one or more modules, use the command

module load module-name [module-name ...] - Loading a module expands your current

PATH,LD_LIBRARY_PATH,PYTHONPATHetc. making the software available. - Example:

$ mathematica --version

-bash: mathematica: command not found

$ module load Mathematica/13.0.0

$ mathematica --version

13.0

- Don't load modules in your

~/.bashrc. You will break things like the desktop. Load modules in each jobscript to make them self contained, otherwise it's impossible for us to offer support.

Modules (continued)🔗

- Toolchains

- Compilers, C, C++, and FORTRAN compilers such as ifort, gcc, clang

- MPI-implementations, such as Intel-mpi, OpenMPI

- Math Kernel Libraries, optimised BLAS and FFT (and more) e.g. mkl

- Large number of software modules; Python (+a lot of addons such as NumPy, SciPy etc), ANSYS, COMSOL, Gaussian, Gromacs, MATLAB, OpenFoam, R, StarCCM, etc.

- module load module-name - load a module

- module list - list currently loaded modules

- module keyword string - search keyword string in modules (e.g. extensions)

- module spider module-name - search for module

- module purge - unloads all current modules

- module show module-name - shows the module content

- module avail - show available modules (for currently loaded toolchain only)

- module unload module-name - unloads a module

Modules (continued)🔗

- A lot of software available in modules.

- Commercial software and libraries; MATLAB, Mathematica, Schrodinger, CUDA, Nsight Compute and much more.

- Tools, compilers, MPI, math libraries, etc.

- Major version update of all software versions is done twice yearly

- Overview of recent toolchains

- Mixing toolchains versions will not work

- Popular top level applications such as TensorFlow and PyTorch may be updated within a single toolchain version.

Software - Python🔗

- We install the fundamental Python packages for HPC, such as NumPy, SciPy, PyTorch, optimised for our systems

- We can also install Python packages if there will be several users.

- We provide

virtualenv,apptainer,conda(least preferable) so you can install your own Python packages locally. https://www.c3se.chalmers.se/documentation/applications/python/ - Avoid using the old OS installed Python.

- Avoid installing python packages directly into home directory with

pip install --user. They will leak into containers and other environments, and will quickly eat up your quota.

Software - Installing software🔗

- You are ultimately responsible for having the software you need

- You are also responsible for having any required licenses

- We're happy to help you installing software - ask us if you're unsure of what compiler or maths library to use, for example.

- We can also install software centrally, if there will be multiple users, or if the software requires special permissions. You must supply us with the installation material (if not openly available).

- If the software already has configurations in EasyBuild then installations can be very quick.

- You can run your own containers.

Software - Building software🔗

- Use modules for build tools things

- buildenv modules, e.g.

buildenv/default-foss-2023a-CUDA-12.1.1provides a build environment with GCC, OpenMPI, OpenBLAS, CUDA - many important tools:

CMake,Autotools,git, ... - and much more

Python,Perl,Rust, ...

- buildenv modules, e.g.

- You can link against libraries from the module tree. Modules set

LIBRARY_PATHand other environment variables and more which can often be automatically picked up by good build systems. - Poor build tools can often be "nudged" to find the libraries with configuration flags like

--with-python=$EBROOTPYTHON - You can only install software in your allocated disk spaces (nice build tools allows you to specify a

--prefix=path_to_local_install)- Many "installation instructions" online falsely suggest you should use

sudoto perform steps. They are wrong.

- Many "installation instructions" online falsely suggest you should use

- Need a common dependency? You can request we install it as another module.

Software - Installing binary (pre-compiled) software🔗

- Common problem is that software requires a newer glibc version. This is tied to the OS and can't be upgraded.

- You can use Apptainer (Singularity) container to wrap this for your software.

- Make sure to use binaries that are compiled optimised for the hardware.

- Alvis and Vera support up to AVX512.

- Difference can be huge. Example: Compared to our optimised NumPy builds, a generic x86 version is up to ~9x slower on Vera.

- Support for hardware like the Infiniband network and GPUDirect can also be critical for performance.

- AVX512 (2015) > AVX2 (2008) > AVX (2011) > SSE (1999-2006) > Generic instructions (<1996).

- Difference between Skylake and upcoming Icelake relatively are small.

- Software optimized for Skylake will also run on Icelake, but not the other way around as Icelake introduced a few new CPU instructions.

Building containers🔗

- Simply

apptainer build my.sif my.deffrom a given definition file, e.g:

Bootstrap: docker

From: continuumio/miniconda3:4.12.0

%files

requirements.txt

%post

/opt/conda/bin/conda install -y --file requirements.txt

- You can boostrap much faster from existing containers (even your own) if you want to add things:

Bootstrap: localimage

From: path/to/existing/container.sif

%post

/opt/conda/bin/conda install -y matplotlib

- Final image is small portable single file.

- More things can be added to the definition file e.g.

%environment

Storing data🔗

- Home directories

$HOME = /cephyr/users/<CID>/Vera$HOME = /cephyr/users/<CID>/Alvis

- The home directory is backed up every night

- We use quota to limit storage use

- To check your current quota on all your active storage areas, run

C3SE_quota - Quota limits (Cephyr):

- User home directory (

/cephyr/users/<CID>/)- 30GB, 60k files

- Use

where-are-my-filesto find file quota on Cephyr.

- User home directory (

- https://www.c3se.chalmers.se/documentation/filesystem/

Storing data🔗

- If you need to store more data, you can apply for a storage project

$SLURM_SUBMIT_DIRis defined in jobs, and points to where you submitted your job.- Try to avoid lots of small files: sqlite or HDF5 are easy to use!

- Data deletion policy for storage projects.

- See NAISS UA for user data deletion.

Storing data - TMPDIR🔗

$TMPDIR: local scratch disk on the node(s) of your jobs. Automatically deleted when the job has finished.- When should you use

$TMPDIR?- The only good reason NOT to use

$TMPDIRis if your program only loads data in one read operation, processes it, and writes the output.

- The only good reason NOT to use

- It is crucial that you use

$TMPDIRfor jobs that perform intensive file I/O - If you're unsure what your program does: investigate it, or use

$TMPDIR! - Using

/cephyr/...or/mimer/...means the network-attached permanent storage is used. - Using

sbatch --gres=ptmpdir:1you get a distributed, parallel$TMPDIRacross all nodes in your job. Always recommended for multi-node jobs that use $TMPDIR.

Your projects🔗

projinfolists your projects and current usage.projinfo -Dbreaks down usage day-by-day (up to 30 days back).

Project Used[h] Allocated[h] Queue

User

-------------------------------------------------------

C3SE2017-1-8 15227.88* 10000 vera

razanica 10807.00*

kjellm 2176.64*

robina 2035.88* <-- star means we are over 100% usage

dawu 150.59* which means this project has lowered priority

framby 40.76*

-------------------------------------------------------

C3SE507-15-6 9035.27 28000 mob

knutan 5298.46

robina 3519.03

kjellm 210.91

ohmanm 4.84

Running jobs🔗

- On compute clusters jobs must be submitted to a queuing system that starts your jobs on the compute nodes:

sbatch <arguments> script.sh

- Simulations must NOT run on the login nodes. Prepare your work on the front-end, and then submit it to the cluster

- A job is described by a script (script.sh above) that is passed on to the queuing system by the sbatch command

- Arguments to the queue system can be given in the

script.shas well as on the command line - Maximum wall time is 7 days (we might extend it manually in rare occasions)

- Anything long running should use checkpointing of some sort to save partial results.

- When you allocate less than a full node, you are assigned a proportional part of the node's memory and local disk space as well.

- See https://www.c3se.chalmers.se/documentation/running_jobs

Running jobs on Vera🔗

-C MEM96requests a 96GB node - 168 (skylake) total (some private)-C MEM192requests a 192GB node - 17 (skylake) total (all private)-C MEM384requests a 384GB node - 7 (skylake) total (5 private, 2 GPU nodes)-C MEM512requests a 512GB node - 20 (icelake) total (6 GPU nodes)-C MEM768requests a 768GB node - 2 (skylake) total-C MEM1024requests a 1024GB node - 9 (icelake) total (3 private)-C MEM2048requests a 2048GB node - 3 (icelake) total (all private)-C 25Grequests a (skylake) node with 25Gbit/s storage and internet connection (nodes without 25G still uses fast Infiniband for access to/cephyr).--gpus-per-node=T4:1requests 1 T4--gpus-per-node=V100:2requests 2 V100--gpus-per-node=A40:4requests 4 A40--gpus-per-node=A100:4requests 4 A100- Don't specify constraints (

-C) unless you know you need them.

Job cost on Vera🔗

- On Vera, jobs cost based on the number of physical cores they allocate, plus

| Type | VRAM | Additional cost |

|---|---|---|

| T4 | 16GB | 6 |

| A40 | 48GB | 16 |

| V100 | 32GB | 20 |

| A100 | 40GB | 48 |

- Example: A job using a full node with a single T4 for 10 hours:

(32 + 6) * 10 = 380core hours - Note: 16, 32, and 64 bit floating point performance differ greatly between these specialized GPUs. Pick the one most efficient for your application.

- Additional running cost is based on the price compared to a CPU node.

- You don't pay any extra for selecting a node with more memory; but you are typically competing for less available hardware.

- GPUs are cheap compared to CPUs in regard to their performance

Running on Icelake vs Skylake🔗

- If specific memory or GPU model, there are only currently one option for the CPU, and the job will automatically end up using that.

- Skylake (32 cores per node):

MEM96,MEM192,MEM384,MEM768,25G, or T4, V100 gpus

- Icelake (64 cores per node):

MEM512,MEM1024,MEM2048, or A40, A100 gpus

- You can explicitly request either node type with

-C SKYLAKEand-C ICELAKE. - If you don't specify any constraint, you will be automatically assigned

-C SKYLAKE(for now). - You can use

-C "SKYLAKE|ICELAKE"to opt into using either node type. Note the differing core count, so you want to use this with care!

Viewing available nodes🔗

jobinfo -p veracommand shows the current state of nodes in the main partition

Node type usage on main partition:

TYPE ALLOCATED IDLE OFFLINE TOTAL

ICELAKE,MEM1024 4 2 0 6

ICELAKE,MEM512 13 0 0 13

SKYLAKE,MEM192 17 0 0 17

SKYLAKE,MEM768 2 0 0 2

SKYLAKE,MEM96,25G 16 0 4 20

SKYLAKE,MEM96 172 0 4 176

Total GPU usage:

TYPE ALLOCATED IDLE OFFLINE TOTAL

A100 8 4 0 12

V100 4 4 0 8

T4 7 1 0 8

A40 0 16 0 16

Vera script example🔗

#!/bin/bash

#SBATCH -A C3SE2021-2-3 -p vera

#SBATCH -C SKYLAKE

#SBATCH -n 64

#SBATCH -t 2-00:00:00

#SBATCH --gres=ptmpdir:1

module load ABAQUS/2022-hotfix-2223 intel/2022a

cp train_break.inp $TMPDIR

cd $TMPDIR

abaqus cpus=$SLURM_NTASKS mp_mode=mpi job=train_break

cp train_break.odb $SLURM_SUBMIT_DIR

Vera script example🔗

#!/bin/bash

#SBATCH -A C3SE2021-2-3 -p vera

#SBATCH -t 2-00:00:00

#SBATCH --gpus-per-node=V100:1

unzip many_tiny_files_dataset.zip -d $TMPDIR/

apptainer exec --nv ~/tensorflow-2.1.0.sif python trainer.py --training_input=$TMPDIR/

Vera script example🔗

- Submitted with

sbatch --array=0-99 wind_turbine.sh

#!/bin/bash

#SBATCH -A C3SE2021-2-3

#SBATCH -n 1

#SBATCH -C "ICELAKE|SKYLAKE"

#SBATCH -t 15:00:00

#SBATCH --mail-user=zapp.brannigan@chalmers.se --mail-type=end

module load MATLAB

cp wind_load_$SLURM_ARRAY_TASK_ID.mat $TMPDIR/wind_load.mat

cp wind_turbine.m $TMPDIR

cd $TMPDIR

RunMatlab.sh -f wind_turbine.m

cp out.mat $SLURM_SUBMIT_DIR/out_$SLURM_ARRAY_TASK_ID.mat

- Environment variables like

$SLURM_ARRAY_TASK_IDcan also be accessed from within all programming languages, e.g:

array_id = getenv('SLURM_ARRAY_TASK_ID'); % matlab

array_id = os.getenv('SLURM_ARRAY_TASK_ID') # python

Vera script example🔗

- Submitted with

sbatch --array=0-50:5 diffusion.sh

#!/bin/bash

#SBATCH -A C3SE2021-2-3

#SBATCH -C ICELAKE

#SBATCH -n 128 -t 2-00:00:00

module load intel/2022a

# Set up new folder, copy the input file there

temperature=$SLURM_ARRAY_TASK_ID

dir=temp_$temperature

mkdir $dir; cd $dir

cp $HOME/base_input.in input.in

# Set the temperature in the input file:

sed -i 's/TEMPERATURE_PLACEHOLDER/$temperature' input.in

mpirun $HOME/software/my_md_tool -f input.in

Here, the array index is used directly as input. If it turns out that 50 degrees was insufficient, then we could do another run:

sbatch --array=55-80:5 diffusion.sh

Vera script example🔗

Submitted with: sbatch run_oofem.sh

#!/bin/bash

#SBATCH -A C3SE507-15-6 -p mob

#SBATCH --ntasks-per-node=32 -N 3

#SBATCH -J residual_stress

#SBATCH -t 6-00:00:00

#SBATCH --gres=ptmpdir:1

module load PETSc

cp $SLURM_JOB_NAME.in $TMPDIR

cd $TMPDIR

mkdir $SLURM_SUBMIT_DIR/$SLURM_JOB_NAME

while sleep 1h; do

rsync -a *.vtu $SLURM_SUBMIT_DIR/$SLURM_JOB_NAME

done &

LOOPPID=$!

mpirun $HOME/bin/oofem -p -f "$SLURM_JOB_NAME.in"

kill $LOOPPID

rsync -a *.vtu $SLURM_SUBMIT_DIR/$SLURM_JOBNAME/

GPU example🔗

#!/bin/bash

#SBATCH -A C3SE2021-2-3

#SBATCH -t 2-00:00:00

#SBATCH --gpu-per-node=A40:2

apptainer exec --nv tensorflow-2.1.0.sif python cat_recognizer.py

Interactive use🔗

You are allowed to use the Thinlinc machines for light/moderate tasks that require interactive input. If you need all cores, or load for a extended duration, you must run on the nodes:

srun -A C3SE2021-2-3 -n 4 -t 00:30:00 --pty bash -is

you are eventually presented with a shell on the node:

[ohmanm@vera12-3]#

- Useful for debugging a job-script, application problems, extremely long compilations.

- Not useful when there is a long queue (you still have to wait), but can be used with private partitions.

sruninteractive jobs will be aborted if the login node needs to be rebooted or loss of internet connectivity. Prefer always using the portal.

Job command overview🔗

sbatch: submit batch jobssrun: submit interactive jobsjobinfo,squeue: view the job-queue and the state of jobs in queuescontrol show job <jobid>: show details about job, including reasons why it's pendingsprio: show all your pending jobs and their priorityscancel: cancel a running or pending jobsinfo: show status for the partitions (queues): how many nodes are free, how many are down, busy, etc.sacct: show scheduling information about past jobsprojinfo: show the projects you belong to, including monthly allocation and usage- For details, refer to the -h flag, man pages, or google!

Job monitoring🔗

- Why am I queued?

jobinfo -u $USER:- Priority: Waiting for other queued jobs with higher priority.

- Resources: Waiting for sufficient resources to be free.

- AssocGrpBillingRunMinutes: We limit how much you can have running at once (<= 100% of 30-day allocation * 0.5^x where x is the number of stars in

projinfo).

- You can log on to the nodes that your job got allocated by using ssh (from the login node) as long as your job is running. There you can check what your job is doing, using normal Linux commands - ps, top, etc.

- top will show you how much CPU your process is using, how much memory, and more. Tip: press 'H' to make top show all threads separately, for multithreaded programs

- iotop can show you how much your processes are reading and writing on disk

- Performance benchmarking with e.g. Nvidia Nsight compute

- Debugging with gdb, Address Sanitizer, or Valgrind

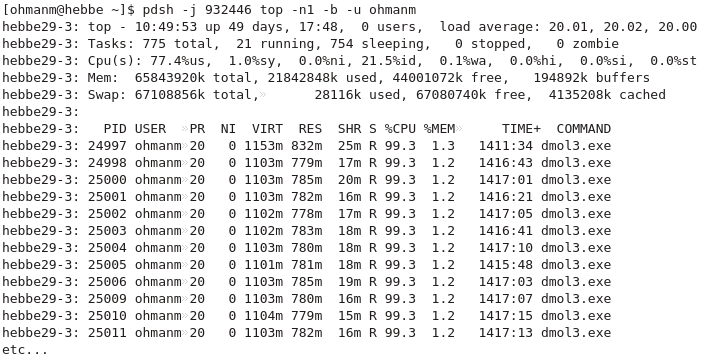

Job monitoring🔗

- Running top on your job's nodes:

System monitoring🔗

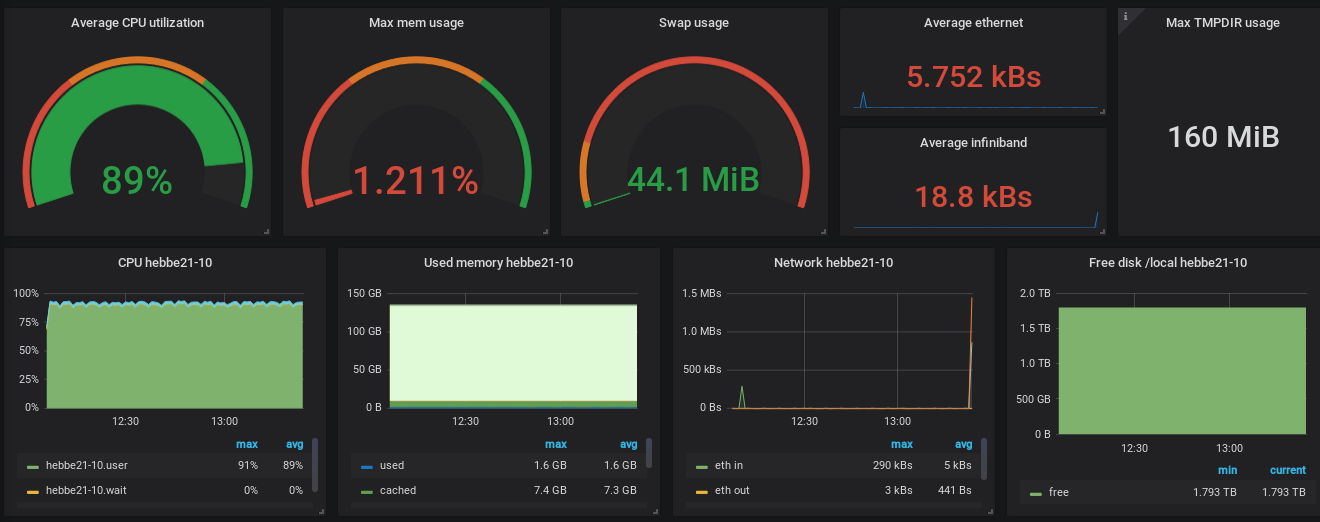

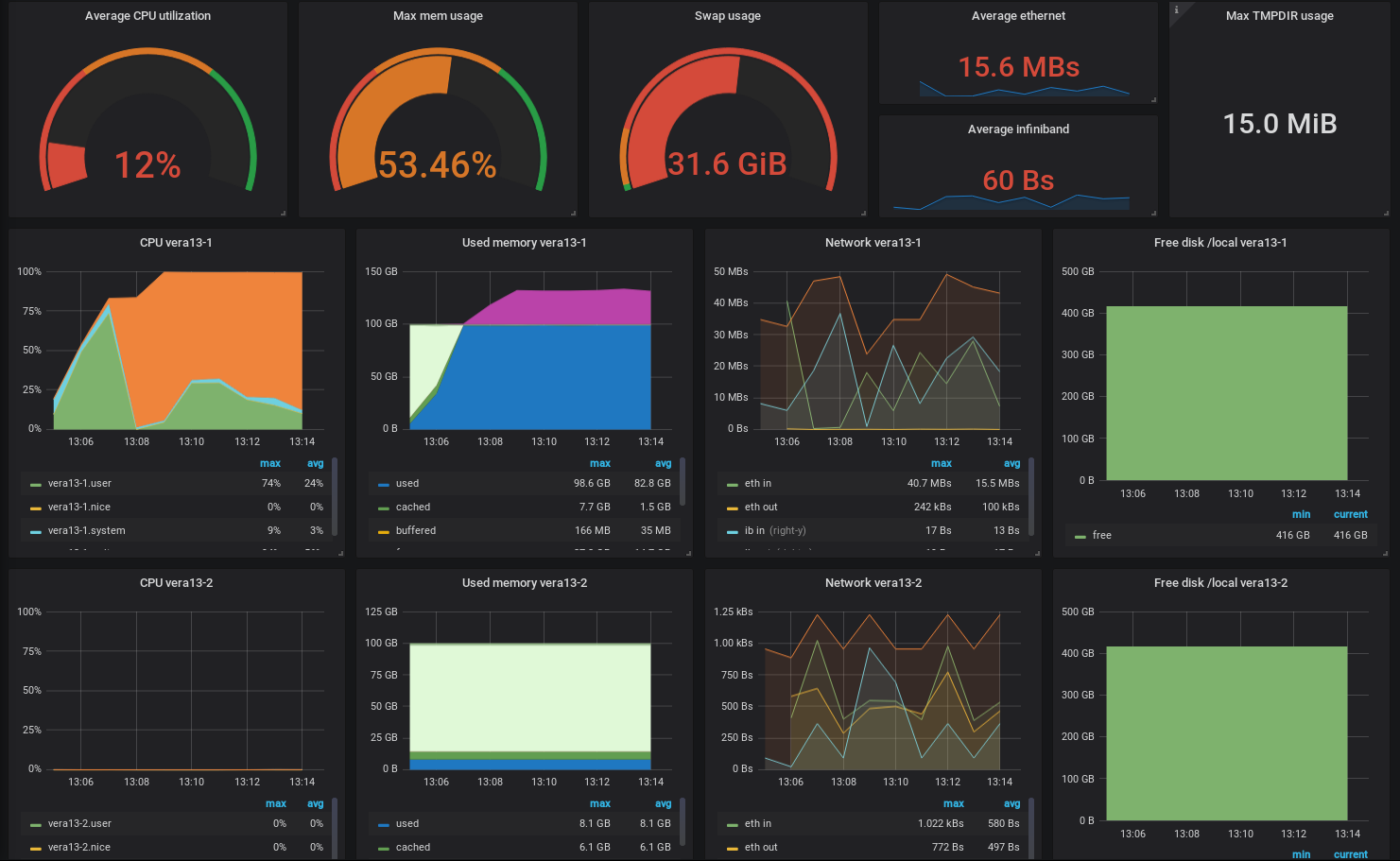

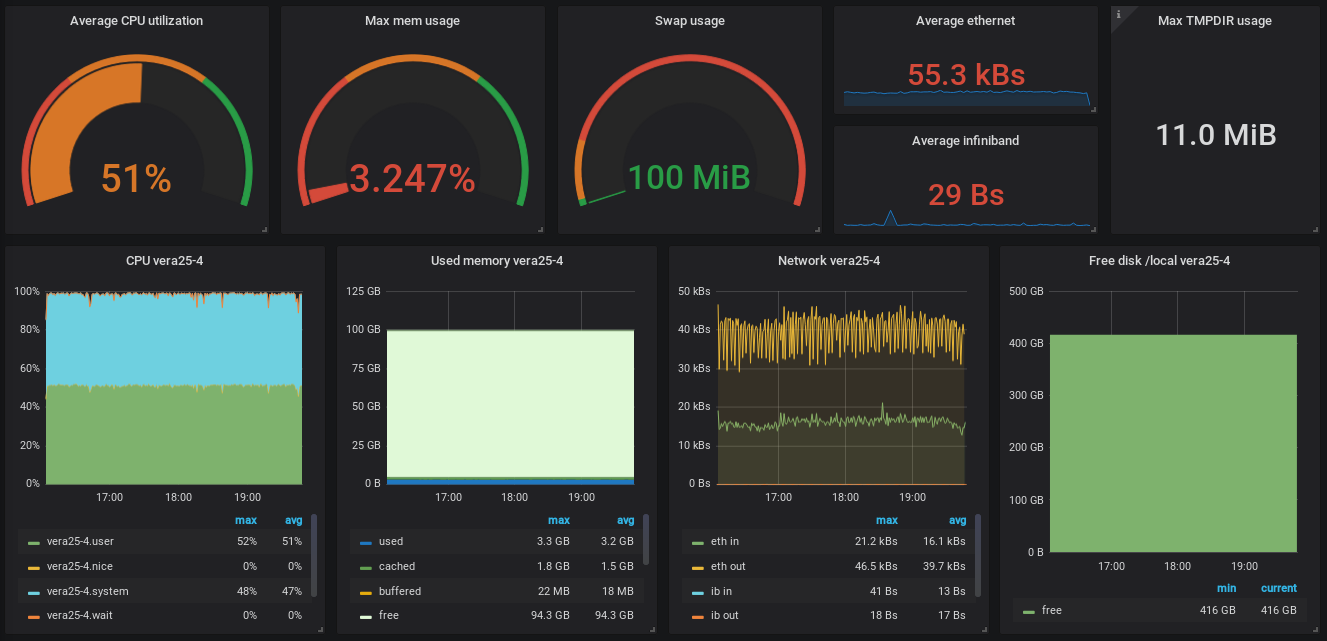

job_stats.py JOBIDis essential.- Check e.g. memory usage, user, system, and wait CPU utilisation, disk usage, etc

sinfo -Rlcommand shows how many nodes are down for repair.

System monitoring🔗

- The ideal job, high CPU utilisation and no disk I/O

System monitoring🔗

- Looks like something tried to use 2 nodes incorrectly.

- Requested for 64 cores but only used half of them. One node was just idling.

System monitoring🔗

- Extremely inefficient I/O. All cores are waiting for each other finishing writing most of time (system cpu usage).

Profiling🔗

- With the right tools you can easily dive into where your code bottlenecks are, we recommend:

- TensorFlow: TensorBoard

- PyTorch:

torch.profiler(possibly with TensorBoard) - Python: Scalene

- Compiled CPU or GPU code: NVIDIA Nsight Systems

- MATLAB: Built in profiler

- Tools can be used interactively on compute nodes with OpenOndemand portals!

Things to keep in mind🔗

- Never run (big or long) jobs on the login node! If you do, we will kill the processes. If you keep doing it, we'll throw you out and block you from logging in for a while! Prepare your job, do tests and check that everything's OK before submitting the job, but don't run the job there!

- The Open Ondemand portals allow interactive desktop and web apps directly on the compute nodes. Use this for heavy interactive work.

- If your home dir runs out of quota or you put to much experimental stuff in your

.bashrcfile, expect things like the desktop session to break. Many support tickets are answered by simply clearing these out. - Keep an eye on what's going on - use normal Linux tools on the login node and on the allocated nodes to check CPU, memory and network usage, etc. Especially for new jobscripts/codes! Do check

job_stats.py! - Think about what you do - if you by mistake copy very large files back and forth you can slow the storage servers or network to a crawl

Getting support🔗

- We ask all students to pass Introduction to computer clusters but all users who whishes can attend this online self learning course.

- Students should first speak to their supervisor for support

- We provide support to our users, but not for any and all problems

- We can help you with software installation issues, and recommend compiler flags etc. for optimal performance

- We can install software system-wide if there are many users who need it - but not for one user (unless the installation is simple)

- We don't support your application software or help debugging your code/model or prepare your input files

Getting support🔗

- Staff are available in our offices, to help with those things that are hard to put into a support request email (book a time in advance please)

- Origo building - Fysikgården 4, one floor up, ring the bell

- We also offer advanced support for things like performance optimisation, advanced help with software development tools or debuggers, workflow automation through scripting, etc.

Getting support - support requests🔗

- If you run into trouble, first figure out what seems to go wrong. Use the following as a checklist:

- make sure you simply aren't over disk quota with

C3SE_quota - something wrong with your job script or input file?

- does your simulation diverge?

- is there a bug in the program?

- any error messages? Look in your manuals, and use Google!

- check the node health: Did you over-allocate memory until Linux killed the program?

- Try to isolate the problem - does it go away if you run a smaller job? does it go away if you use your home directory instead of the local disk on the node?

- Try to create a test case - the smallest and simplest possible case that reproduces the problem

Getting support - error reports🔗

- In order to help you, we need as much and as good information as possible:

- What's the job-ID of the failing job?

- What working directory and what job-script?

- What software are you using?

- What's happening - especially error messages?

- Did this work before, or has it never worked?

- Do you have a minimal example?

- No need to attach files; just point us to a directory on the system.

- Where are the files you've used - scripts, logs etc?

- Look at our Getting support page

In summary🔗

- Our web page is https://www.c3se.chalmers.se

- Read up how to use the file system

- Read up on the module system and available software

- Learn a bit of Linux if you don't already know it - no need to be a guru, but you should feel comfortable working in it

- Play around with the system, and ask us if you have questions

- Please use the SUPR support form - it provides additional automatic project and user information that we need. Please always prefer this to sending emails directly.

Outlook🔗

- We have more GPUs, but are still often not utilized that much.

- We are expanding Vera with upcoming 44 Icelake nodes. Skylake nodes will retire by the end of this year